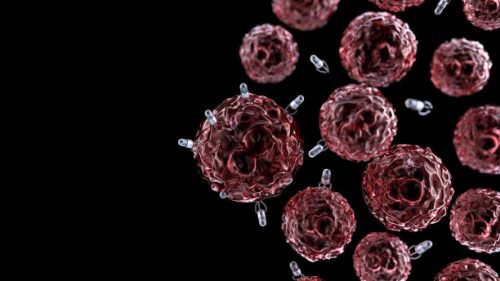

Explainable AI in Nanomedicine: Interpretable Models for Nano–Bio Interactions

Making nanomedicine understandable—one interpretable model at a time.

About the Program:

This workshop explores the rapidly growing field of Explainable AI (XAI) in nanomedicine, focusing on how interpretable machine-learning models can unravel complex nano–bio interactions, cellular uptake mechanisms, toxicity patterns, and targeting behaviors. Participants will learn how transparency-driven AI approaches—such as SHAP, LIME, rule-based models, and interpretable neural networks—help scientists understand why nanoparticles behave the way they do inside biological systems. Through structured lectures and conceptual demonstrations, the workshop bridges nanotechnology, data science, and biomedical research to empower evidence-driven, safer, and clinically translatable nanocarrier design.

Aim: To train participants in the principles and applications of Explainable AI for understanding nano–bio interactions and guiding nanomedicine development.

Program Objectives:

Participants will:

- Understand nano–bio interactions, cellular uptake, biodistribution, and toxicity pathways.

- Learn the fundamentals of Explainable AI (XAI) and interpretable machine learning.

- Explore model transparency tools such as SHAP, LIME, and feature importance analysis.

- Interpret AI predictions related to nanoparticle behavior and biological responses.

- Evaluate XAI frameworks for improving safety, efficacy, and regulatory acceptance of nanomedicine.

What will you learn?

Day 1: Foundations of Nano–Bio Interactions & Interpretable ML

- Nano–bio interactions: Protein corona, cellular uptake, biodistribution, toxicity mechanisms

- Need for interpretability in nanomedicine

- Introduction to interpretable ML: Decision trees, logistic regression, rule-based classifiers

- Nanoparticle descriptors (size, charge, shape)

- Tools: Python (Scikit-learn), Jupyter Notebooks

- Mini Task: Build a decision tree model predicting nanoparticle toxicity using key descriptors.
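As a rough idea of what the Day 1 mini task could look like, the sketch below fits a shallow decision tree with scikit-learn and prints its learned rules as text. Everything about the data is an assumption made for illustration: the descriptor names (`size_nm`, `zeta_potential_mV`, `aspect_ratio`), their ranges, and the labelling rule are invented and are not toxicological findings. A real exercise would load a curated nanoparticle dataset instead.

```python
import numpy as np
from sklearn.tree import DecisionTreeClassifier, export_text

# Synthetic descriptors (hypothetical ranges, illustration only).
rng = np.random.default_rng(0)
n_samples = 300
size_nm = rng.uniform(10, 200, n_samples)
zeta_potential_mV = rng.uniform(-40, 40, n_samples)
aspect_ratio = rng.uniform(1, 5, n_samples)

# Invented labelling rule so the tree has a pattern to recover.
# This is NOT a real toxicity criterion.
toxic = ((size_nm < 80) & (zeta_potential_mV > 0)).astype(int)

X = np.column_stack([size_nm, zeta_potential_mV, aspect_ratio])
feature_names = ["size_nm", "zeta_potential_mV", "aspect_ratio"]

# A shallow tree keeps the model small enough to read in full.
tree = DecisionTreeClassifier(max_depth=3, random_state=0)
tree.fit(X, toxic)

# export_text renders the fitted tree as nested if/else rules.
rules = export_text(tree, feature_names=feature_names)
print(rules)
print("Training accuracy:", tree.score(X, toxic))
```

Capping `max_depth` trades some accuracy for a rule set short enough to inspect by eye, which is the point of using an interpretable model here.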

Day 2: Explainable AI Techniques & Interpretation Tools

- Explainable AI (XAI) frameworks: SHAP and LIME

- Feature importance analysis

- Visualizing nanoparticle behavior with XAI

- Tools: Python with the SHAP and LIME libraries for practical analysis

- Mini Task: Apply SHAP and LIME to a toxicity prediction model for nanoparticles.
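To make the idea behind SHAP concrete, the sketch below computes exact Shapley values by brute force for a three-feature toy model, using only the Python standard library. It does not call the SHAP or LIME libraries, which use faster approximations and handle many more features. The scoring function, feature names, instance, and baseline are all hypothetical stand-ins for a trained toxicity model.

```python
from itertools import combinations
from math import factorial

FEATURES = ["size_nm", "zeta_potential_mV", "ligand_density"]


def toy_toxicity_score(x):
    """Hypothetical scoring function standing in for a trained model."""
    size, zeta, ligand = x
    score = 0.0
    if size < 50:
        score += 0.4
    if zeta > 10:
        score += 0.3
    if size < 50 and zeta > 10:
        score += 0.2  # interaction between size and charge
    if ligand > 0.5:
        score -= 0.1
    return score


def shapley_values(model, instance, baseline):
    """Exact Shapley values; cost grows as 2**n, so only for small n."""
    n = len(instance)

    def value(coalition):
        # Features in the coalition take the instance's values,
        # all other features stay at the baseline.
        mixed = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for k in range(n):  # k = size of the coalition without feature i
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for subset in combinations(others, k):
                s = set(subset)
                total += weight * (value(s | {i}) - value(s))
        phi.append(total)
    return phi


instance = [30.0, 25.0, 0.8]    # hypothetical particle to explain
baseline = [120.0, -5.0, 0.2]   # hypothetical reference particle
phi = shapley_values(toy_toxicity_score, instance, baseline)

for name, contribution in zip(FEATURES, phi):
    print(f"{name}: {contribution:+.3f}")
```

The contributions sum to the difference between the model's score for the instance and its score for the baseline, and the interaction term is split evenly between size and charge. These are the properties that make Shapley-based explanations easy to audit.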

Day 3: Applying XAI for Safer & Smarter Nanomedicine

- Refining nanoparticle design: Surface chemistry, size, charge, ligand density

- Case studies on biodistribution, uptake, and toxicity predictions

- XAI for regulatory science and risk assessment

- Tools: PyMOL, Chimera, XAI frameworks (SHAP, LIME)

- Mini Task: Create a report interpreting a nanoparticle model using SHAP and LIME.
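One possible shape for the Day 3 report is a short plain-text summary that ranks per-feature contributions by magnitude and states their direction. The sketch below assumes the contributions have already been computed by an attribution method. The function name `render_report`, the feature names, and the numbers passed to it are placeholders for illustration, not workshop results.

```python
def render_report(prediction, baseline, attributions):
    """Format per-feature attributions as a ranked plain-text summary."""
    ranked = sorted(attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    lines = [
        f"Predicted toxicity score: {prediction:.2f} (baseline {baseline:.2f})",
        "Feature contributions, largest first:",
    ]
    for name, value in ranked:
        if value > 0:
            direction = "raises"
        elif value < 0:
            direction = "lowers"
        else:
            direction = "does not change"
        lines.append(f"  {name}: {value:+.2f} ({direction} the prediction)")
    return "\n".join(lines)


# Placeholder attribution values, not measured data.
example_attributions = {
    "ligand_density": -0.10,
    "size_nm": 0.50,
    "zeta_potential_mV": 0.40,
}
report = render_report(0.80, 0.00, example_attributions)
print(report)
```

Sorting by absolute value puts the most influential descriptors first regardless of sign, so a reader sees what drives the prediction before the minor effects.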

Get an e-Certificate of Participation!

Intended For:

- UG & PG students in Biotechnology, Nanotechnology, Pharmacy, Biomedical Sciences, AI/ML

- PhD scholars focusing on nanomedicine, drug delivery, ML, toxicology, or materials science

- Academicians integrating AI with nanotechnology or biomedical research

- Industry professionals from pharma, biotech, diagnostics, data science, and regulatory domains

Program Outcomes

By the end of the workshop, participants will be able to:

- Explain the role of XAI in scientific decision-making in nanomedicine.

- Apply interpretability concepts to the analysis of nanoparticle datasets.

- Identify key nanoparticle descriptors influencing biological outcomes.

- Interpret model outputs related to toxicity, uptake, and biodistribution.

- Evaluate how XAI strengthens trust, reproducibility, and clinical translation in nanomedicine.